I was recently tasked with optimizing a search results page for a customer who had a “feeling” it wasn’t working. For the record, I did not create the existing layout; if I had, I would not be writing this post :)

The lesson to be learned: keep your UI clean, remove the clutter, and stick to science.

I learned long ago that UX is very subjective and often emotionally loaded, and I do not want to force my views onto others without evidence to back them up. First, I got hold of the web server logs and wrote a small utility to parse out all the search queries going back a couple of months. By looking at the query strings I could easily pinpoint where in the UI people were clicking, which gave me documented metrics on which parts of the current UI work and which don’t.

Note: There is no out-of-the-box way of measuring where users click in SP2010 or SP2013 search, so you have to work this out yourself, either by adding script and custom logging to the page or by parsing the IIS logs as I did.
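To make the log-parsing approach concrete, here is a minimal sketch of such a utility. It is not the tool I used; it assumes the IIS logs are in W3C extended format (a `#Fields:` directive naming the columns, `-` for empty values), and the page paths and query parameters it checks (`results.aspx`, `peopleresults.aspx`, `r` for refinements, `start1` for paging) are SP2010-style assumptions you should verify against your own logs before trusting the numbers.

```python
"""Minimal sketch: estimate how users reached the search results page from IIS W3C logs.

Assumptions (hypothetical, verify against your own farm and logs):
- W3C extended log format with a #Fields directive
- results/people page paths and the r / start1 parameters are SP2010-style guesses
"""
from collections import Counter
from urllib.parse import parse_qs

# Hypothetical page suffixes; check the actual URLs of your search center.
PEOPLE_PAGE = "/peopleresults.aspx"
RESULTS_PAGE = "/results.aspx"


def iter_requests(lines):
    """Yield one dict per request, keyed by the column names from the latest #Fields line."""
    fields = []
    for line in lines:
        line = line.rstrip("\n")
        if line.startswith("#Fields:"):
            fields = line[len("#Fields:"):].split()
        elif line and not line.startswith("#") and fields:
            values = line.split(" ")
            if len(values) == len(fields):  # skip truncated or malformed lines
                yield dict(zip(fields, values))


def classify(stem, query):
    """Guess which part of the UI produced the request (hypothetical rules)."""
    if stem.endswith(PEOPLE_PAGE):
        return "people"
    params = parse_qs(query)
    if "r" in params:       # assumed refinement parameter
        return "refiner"
    if "start1" in params:  # assumed paging parameter (page 2 and beyond)
        return "paging"
    return "plain query"


def usage_breakdown(lines):
    """Return the percentage of search requests per category."""
    counts = Counter()
    for req in iter_requests(lines):
        stem = req.get("cs-uri-stem", "").lower()
        if stem.endswith(PEOPLE_PAGE) or stem.endswith(RESULTS_PAGE):
            query = req.get("cs-uri-query", "-")
            counts[classify(stem, "" if query == "-" else query)] += 1
    total = sum(counts.values())
    return {kind: 100.0 * n / total for kind, n in counts.items()} if total else {}
```

Fed four sample requests (a plain query, a refined query, a page 2 request and a people search), `usage_breakdown` returns 25.0 for each category. Telling related-search clicks apart from typed queries depends on whatever extra parameter or referrer your own pages produce, so that rule is left out here.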

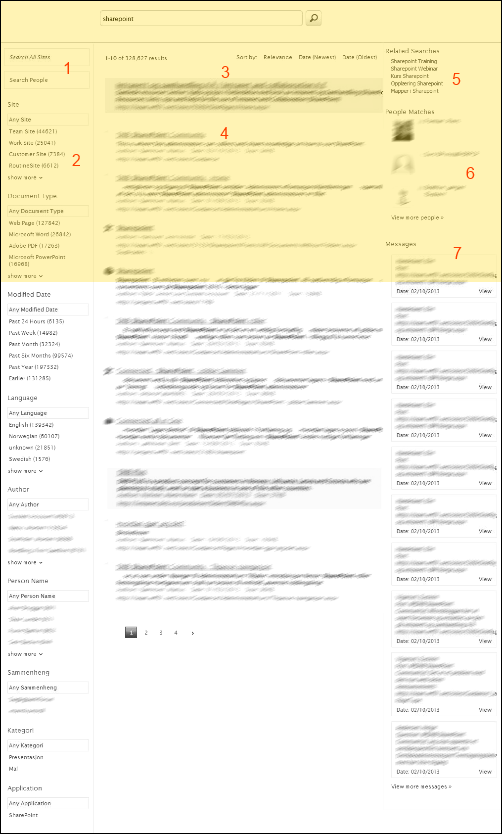

The screenshot above shows what the results page looks like today. The yellow area is what is visible on a typical laptop screen (it’s actually even less, as I’ve cut away the SharePoint menus). Studies over the years have shown that users rarely interact with information below “the fold”, and this page has tons of information outside the visible screen real estate.

The numbers in the screenshot mark the following:

1. Entity navigator (All Sites, People, Messages). By default this navigator sits beside the search box, but here it has been moved to the top left. It typically selects the information silo at a high level.

2. A long list of refiners

3. Best bets

4. Search results

5. Related searches

6. People matches

7. Message matches (social)

Here are the metrics broken down by usage:

- 6% of all queries came from clicking on related searches, an indication that people are at least picking up hints for improving their queries

- 2% of all queries were “Page 2” queries, which is interesting, as it shows people are moving on to the next page to find what they are looking for

- 0.3% of the queries used the Document Type refiner, which was the most used refiner. Not a whole lot! Especially since I know from previous research that people want this one: many people use it, or at least say they would like to.

In other words, more or less NONE of the refiners are being used, and the messages list in the right-hand column is noise.

15% of all queries were people searches, but I had no way of knowing whether they came from the intranet front page or from people clicking one of the “see more people” links in 1 or 6.

With my metrics in hand, I created the proposed solution outlined below. I hope to put it into production and, after a couple of months, measure how it works out.

- I have moved the information silo navigator (tabs) back below the search box, where it is more obvious to users, with the intention of increasing its use. I have also added hit counts to each tab to give a visual indication of how much information you can expect to find.

- Most of the refiners have been removed, but Site and Date have been kept. They now have a more prominent place higher up the page, and both are within the visible screen area, “above the fold”.

- In my experience, people often navigate by the type of document they are looking for. I have therefore moved the Document Type navigator above the search results and represented it with icons for the most used file formats: Word, Excel, PowerPoint and Acrobat Reader. This is visually a lot easier to spot, using well-known icons to toggle formats on and off.

- I have removed the social feed results, which are already covered by a tab in 1), making the right column a lot cleaner.
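The icon-based Document Type navigator boils down to turning the set of toggled icons into a query restriction. The sketch below is one hypothetical way to do that, not how the page is actually built: it assumes SharePoint 2013 KQL with a `FileExtension` managed property (check the property name and extensions in your own search schema), and the icon names and `build_query` helper are made up for illustration.

```python
"""Minimal sketch: map toggled Document Type icons to a KQL property restriction.

Assumption: SharePoint 2013 KQL with a FileExtension managed property;
icon names and helper functions are hypothetical.
"""

# Hypothetical mapping from the four icons in the proposed UI to file extensions.
ICON_EXTENSIONS = {
    "word": ["doc", "docx"],
    "excel": ["xls", "xlsx"],
    "powerpoint": ["ppt", "pptx"],
    "pdf": ["pdf"],
}


def file_type_restriction(selected_icons):
    """OR together the extensions of every selected icon; return "" when none are on."""
    extensions = []
    for icon in selected_icons:
        extensions.extend(ICON_EXTENSIONS[icon])
    if not extensions:
        return ""  # no icon toggled on: do not restrict by file type
    return "(" + " OR ".join(f"FileExtension:{ext}" for ext in extensions) + ")"


def build_query(keywords, selected_icons):
    """Append the restriction to the user's keywords (space-separated terms are ANDed in KQL)."""
    restriction = file_type_restriction(selected_icons)
    return f"{keywords} {restriction}" if restriction else keywords
```

For example, `build_query("budget", ["word", "pdf"])` yields `budget (FileExtension:doc OR FileExtension:docx OR FileExtension:pdf)`, while an empty selection leaves the query untouched, so the icons act as filters rather than a mandatory choice.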

We’re also working on some other concepts which we hope will improve findability:

- filter out draft versions of documents, which are currently indexed

- filter by documents “close to me”

- changing the ranking algorithm based on user context

To sum it up, keep your results page clean and tidy, and use navigation concepts well known to the user, such as tabs (Google and Bing can’t be wrong, right?). Also, measure what works and what doesn’t. This allows you to make informed decisions when changing your UI, instead of relying on committees, hormones and individual taste.