Here’s the presentation Harald Fianbakken and I gave at the Norwegian SharePoint Community meeting on June 17th, 2013.

It was basically a talk where we ranted about our experiences with SharePoint 2013, highlighting the issues we ran into and what we really love.

Here’s a small narrative per slide to give it all some more context.

Slide 6-8: The quality management system is an external system which stores its data as static HTML files. Alongside these files are corresponding image files when the artifact is a process diagram. The files are imported with a custom application which parses the <meta> tags of the HTML files and stores the parsed data as publishing pages inside SharePoint, with custom columns to hold the metadata. The page library has several content types, and the right one is chosen on import depending on the parsed data. The images are stored in an image library.
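To give an idea of the parsing step, here is a minimal sketch in Python (our importer is a custom application, and this is not its code). It collects the name/content pairs from the <meta> tags and picks a content type from them. The meta name `ArtifactType` and the content type names are made up for the example.

```python
from html.parser import HTMLParser


class MetaTagParser(HTMLParser):
    """Collects name/content pairs from <meta> tags."""

    def __init__(self):
        super().__init__()
        self.metadata = {}

    def handle_starttag(self, tag, attrs):
        # HTMLParser lowercases tag and attribute names for us.
        if tag != "meta":
            return
        attributes = dict(attrs)
        name = attributes.get("name")
        content = attributes.get("content")
        if name and content is not None:
            self.metadata[name] = content


def parse_metadata(html):
    """Return a dict with the metadata found in one exported HTML file."""
    parser = MetaTagParser()
    parser.feed(html)
    return parser.metadata


def choose_content_type(metadata):
    """Pick a content type name from the parsed data.

    Hypothetical rule: the real import logic and names are not shown here.
    """
    if metadata.get("ArtifactType") == "ProcessDiagram":
        return "Process Page"
    return "Document Page"
```

Each parsed key would then map to a custom column on the publishing page.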

The HTML files also contain image maps. We parse these and store the coordinates in order to re-create the navigation experience later inside SharePoint.
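Pulling the hotspots out of an image map can be sketched like this, again in Python and only as an illustration. It assumes standard `<area>` elements with integer `coords`; the dictionary layout used to hold the result is an assumption, not how we actually store it.

```python
from html.parser import HTMLParser


class ImageMapParser(HTMLParser):
    """Collects shape, coordinates and link target from <area> tags."""

    def __init__(self):
        super().__init__()
        self.areas = []

    def handle_starttag(self, tag, attrs):
        if tag != "area":
            return
        attributes = dict(attrs)
        coords_text = attributes.get("coords") or ""
        # Assumes integer pixel coordinates separated by commas.
        coords = [int(part) for part in coords_text.split(",") if part.strip()]
        self.areas.append({
            "shape": (attributes.get("shape") or "rect").lower(),
            "coords": coords,
            "href": attributes.get("href"),
        })


def parse_image_map(html):
    """Return the list of clickable areas found in the HTML."""
    parser = ImageMapParser()
    parser.feed(html)
    return parser.areas
```

With the coordinates and targets stored next to the page, the clickable diagram can be rebuilt when the page is rendered.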

Some of the metadata are stored as Managed Metadata, and a taxonomy is built upon import.

Slide 9: We auto-generate content types and lists based on reflection on annotated POCOs. The POCOs are annotated using http://nuget.org/packages/Fianbakken.SharePointations/. Puzzlepart also has a framework which allows the use of LINQ queries on the content types/lists instead of CAML. Sort of like SPMetal, but you start with the POCO, not the other way around.
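The general idea of "start with the class and reflect over its annotations" can be shown with a small Python analogy. This is not the SharePointations API (which is a .NET library); the `column` helper, the `Improvement` class and the output format are all invented for the illustration.

```python
from dataclasses import dataclass, field, fields


def column(display_name, field_type):
    """Hypothetical annotation helper: attaches column info to a class attribute."""
    return field(default=None,
                 metadata={"display_name": display_name, "field_type": field_type})


@dataclass
class Improvement:
    # A plain object whose annotations describe the columns we want.
    title: str = column("Title", "Text")
    description: str = column("Description", "Note")
    due_date: str = column("Due date", "DateTime")


def describe_content_type(cls):
    """Reflect over the annotated class and build a content type description."""
    return {
        "name": cls.__name__,
        "fields": [
            {
                "internal_name": f.name,
                "display_name": f.metadata["display_name"],
                "type": f.metadata["field_type"],
            }
            for f in fields(cls)
        ],
    }
```

A provisioning step would then walk such a description and create the content type and list, so the class stays the single source of truth.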

Slide 11-13: The solution has a part which is an “Improvement Potential” log. When someone reports an improvement a workflow is started. We encountered multiple issues when developing this, mainly on the test server. Most of the time the root cause was missing user profiles and missing e-mail addresses.

Slide 14: There is currently a bug in workflows which comes into play if you add custom task outcomes to the task form, that is, when you want more than just Approve/Reject. If you add more, the reported outcome is always “Approve”. As a workaround, you can retrieve the real value in your workflow and act on that instead. This took us one week to figure out (see the blogs referenced on the last slide for more info).

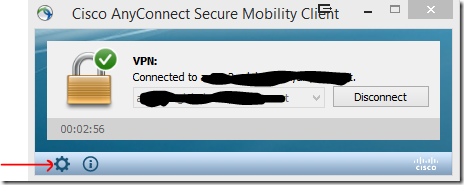

Slide 15: If you don’t click “Publish” before exporting a workflow, you will get an error upon feature activation. Also, you have to retract and redeploy your workflow if you re-export, as the WSPs get new IDs. This is an issue if you develop the workflow on one server and want to move it to another.

Slide 18-19: Excel REST is a good way to get graphs/named entities from within Excel files without having an Enterprise license.
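As a rough illustration, a chart in a workbook is addressed through the `_vti_bin/ExcelRest.aspx` endpoint, followed by the path to the workbook and the chart name. The Python helper below only builds such a URL; the site URL, library, file and chart names in the usage line are placeholders.

```python
from urllib.parse import quote


def excel_rest_chart_url(site_url, workbook_path, chart_name):
    """Build an Excel REST URL that returns a chart as an image.

    workbook_path is the site-relative path to the workbook,
    for example "Shared Documents/kpi.xlsx".
    """
    base = site_url.rstrip("/")
    path = quote(workbook_path.strip("/"))  # keeps "/" and encodes spaces
    chart = quote(chart_name)
    return f"{base}/_vti_bin/ExcelRest.aspx/{path}/Model/Charts('{chart}')?$format=image"


# Placeholder values, not a real site.
url = excel_rest_chart_url("http://intranet/sites/qms", "Shared Documents/kpi.xlsx", "Chart 1")
```

The resulting URL can be used directly as the source of an image on a page.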

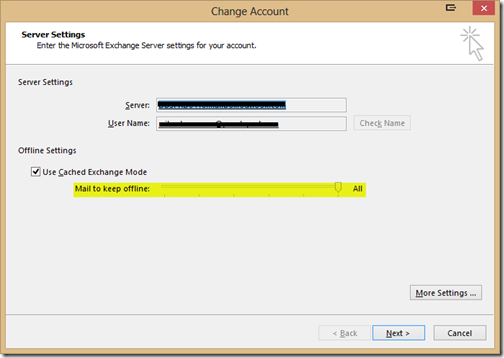

Slide 20-21: See http://techmikael.blogspot.se/2013/04/how-to-enable-page-previews-in.html for more information.

Slide 23-24: By using the SP2013 social API, we can follow or bookmark publishing pages in SharePoint, allowing users to have favorites.
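For reference, following a page over REST boils down to a POST against `social.following/follow` with an actor that points at the page URL. The Python sketch below only assembles the request and sends nothing. Treating a publishing page as a document actor (`ActorType` 1) is an assumption here, and the URLs used with it are placeholders.

```python
import json

FOLLOW_ENDPOINT = "/_api/social.following/follow"
ACTOR_TYPE_DOCUMENT = 1  # assumption: the page is followed as a document


def build_follow_request(site_url, page_url):
    """Return the URL, headers and JSON body for a 'follow' call.

    A real call is a POST and also needs authentication and an
    X-RequestDigest header, both left out of this sketch.
    """
    body = {
        "actor": {
            "__metadata": {"type": "SP.Social.SocialActorInfo"},
            "ActorType": ACTOR_TYPE_DOCUMENT,
            "ContentUri": page_url,
            "Id": None,
        }
    }
    return {
        "url": site_url.rstrip("/") + FOLLOW_ENDPOINT,
        "headers": {
            "Accept": "application/json;odata=verbose",
            "Content-Type": "application/json;odata=verbose",
        },
        "body": json.dumps(body),
    }
```

Listing what the current user already follows then gives you the favorites list.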

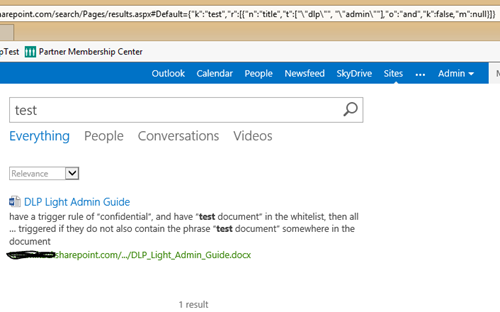

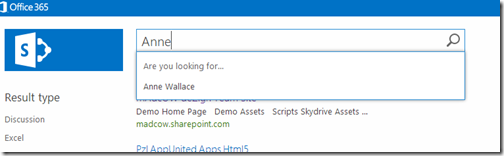

Slide 26-34: We use custom display templates, result sources, query rules and result blocks in order to create a sort of 360-view for processes. Searching for a process will also show the improvements and documents related to that process.

The issues we encountered were related to pulling back managed properties for certain content types, and getting the display templates to trigger correctly. It’s all a bit buggy at the moment, but I’ve been told there are fixes coming in later CUs.

What seems to work the best is to create managed properties on the SSA, stay away from the auto-generated ones, and have one result block per query rule, even though the rules are the same.

Slide 36-40: By using custom properties on terms, we created a configuration option for display forms. The taxonomy states which fields should be shown in our dynamic form based on the values you choose, and which fields should show in new/edit mode. Basically we used the term store as a way to configure an input form. The other choice would have been to create multiple forms to cover all scenarios. But the requirements kept changing, and this gave us flexibility and ease of configuration.
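Conceptually the lookup is as simple as the Python sketch below. The property names (`NewFormFields`, `EditFormFields`, `DisplayFormFields`), the semicolon-separated format and the sample term are assumptions made for the example, not our actual configuration.

```python
# Hypothetical custom property names, one per form mode.
MODE_TO_PROPERTY = {
    "new": "NewFormFields",
    "edit": "EditFormFields",
    "display": "DisplayFormFields",
}


def parse_field_list(value):
    """Split a semicolon-separated property value into field names."""
    return [name.strip() for name in (value or "").split(";") if name.strip()]


def fields_for(term_properties, mode):
    """Return the fields to render for the chosen term and form mode."""
    return parse_field_list(term_properties.get(MODE_TO_PROPERTY[mode]))


# Custom properties as they might be read from the selected term.
safety_term = {
    "NewFormFields": "Title; Description; Location",
    "EditFormFields": "Title; Description; Location; Responsible; DueDate",
}
```

When a requirement changes, you edit a property on the term instead of deploying a new form.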

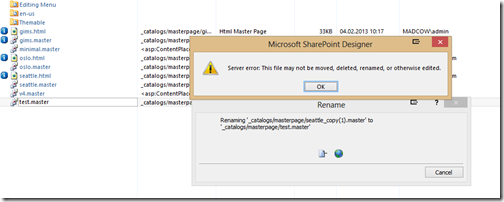

Slide 42-45: Be sure to keep people who know HTML and know SharePoint HTML at hand when implementing a custom design. It saves time!
