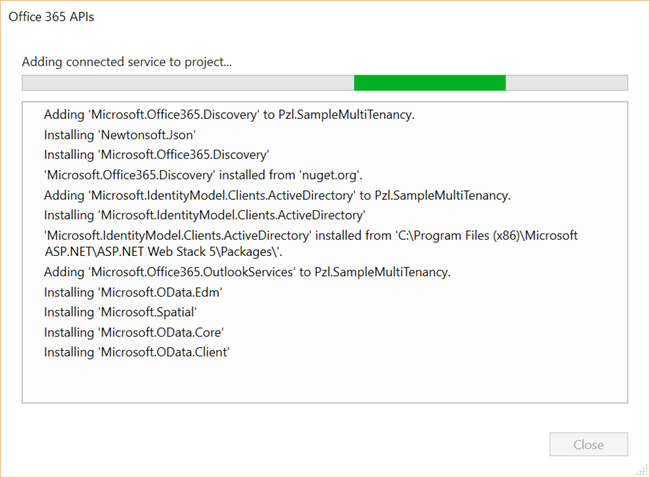

Once the delegate permission for sending e-mails has been set up and the NuGet package for OutlookServicesClient is in place, all that’s missing is the code to send an e-mail.

Add code to authenticate against Exchange and patch AdalTokenCache.cs

The original code added to StartupAuth.cs takes care of the access token for the https://graph.windows.net resource, which is used to query AAD. Performing e-mail actions requires an access token for https://outlook.office365.com/ as well.

I make sure I have constants for both resources at the top of the file.

    private ApplicationDbContext db = new ApplicationDbContext();

    // This is the resource ID of the AAD Graph API. We'll need this to request a token to call the Graph API.
    private static string graphResourceId = "https://graph.windows.net";

    // This is the resource ID of the Outlook API.
    private static string outlookResourceId = "https://outlook.office365.com/";

In the AuthorizationCodeReceived delegate I add a call to get a token for the Outlook resource as well as for the Azure AD Graph one.

    AuthenticationContext authContext = new AuthenticationContext(aadInstance + tenantID, new ADALTokenCache(signedInUserID));

    AuthenticationResult result = authContext.AcquireTokenByAuthorizationCode(
        code, new Uri(HttpContext.Current.Request.Url.GetLeftPart(UriPartial.Path)), credential, graphResourceId);

    AuthenticationResult result2 = authContext.AcquireTokenByAuthorizationCode(
        code, new Uri(HttpContext.Current.Request.Url.GetLeftPart(UriPartial.Path)), credential, outlookResourceId);

This means that for every resource endpoint you want to query, you add a new token request in StartupAuth.cs. It took me a few tries to figure that out, and how it all fits together.

Refactor UserInfo.cs to get tokens from multiple resources

If you look at the end of UserInfo.cs, there is a function named GetTokenForApplication which uses your token cache to silently get a valid access token for the operation you are performing. The flow for Azure AD Graph is the same as for Exchange.

I add a new file named TokenHelper.cs to my project with a general-purpose static class that can be re-used when acquiring tokens.

    using System;
    using System.Configuration;
    using System.Security.Claims;
    using System.Threading.Tasks;
    using Microsoft.IdentityModel.Clients.ActiveDirectory;
    using Pzl.SampleMultiTenancy.Models;

    namespace Pzl.SampleMultiTenancy
    {
        public static class TokenHelper
        {
            public const string GraphResourceId = "https://graph.windows.net";
            public const string UnifiedResourceId = "https://graph.microsoft.com";
            public const string OutlookResourceId = "https://outlook.office365.com/";

            private static readonly string aadInstance = ConfigurationManager.AppSettings["ida:AADInstance"];
            private static readonly string clientId = ConfigurationManager.AppSettings["ida:ClientId"];
            private static readonly string appKey = ConfigurationManager.AppSettings["ida:ClientSecret"];

            public static async Task<string> GetTokenForApplicationSilent(string resourceId)
            {
                var signedInUserID = ClaimsPrincipal.Current.FindFirst(ClaimTypes.NameIdentifier).Value;
                var tenantID = ClaimsPrincipal.Current.FindFirst("http://schemas.microsoft.com/identity/claims/tenantid").Value;
                var userObjectID = ClaimsPrincipal.Current.FindFirst("http://schemas.microsoft.com/identity/claims/objectidentifier").Value;

                // initialize AuthenticationContext with the token cache of the currently signed in user, as kept in the app's EF DB
                var authenticationContext = new AuthenticationContext(aadInstance + tenantID, new ADALTokenCache(signedInUserID));

                try
                {
                    var clientcred = new ClientCredential(clientId, appKey);

                    // get a token for the requested resource without triggering any user interaction
                    // (from the cache, via multi-resource refresh token, etc)
                    var authenticationResult = await authenticationContext.AcquireTokenSilentAsync(
                        resourceId, clientcred, new UserIdentifier(userObjectID, UserIdentifierType.UniqueId));

                    return authenticationResult.AccessToken;
                }
                catch (AggregateException e)
                {
                    // clear the cache if any inner exception says the silent acquisition failed
                    foreach (Exception inner in e.InnerExceptions)
                    {
                        if (!(inner is AdalException)) continue;

                        if (((AdalException)inner).ErrorCode == AdalError.FailedToAcquireTokenSilently)
                        {
                            authenticationContext.TokenCache.Clear();
                        }
                    }

                    throw e.InnerException;
                }
                catch (AdalException exception)
                {
                    if (exception.ErrorCode == AdalError.FailedToAcquireTokenSilently)
                    {
                        authenticationContext.TokenCache.Clear();
                        throw;
                    }

                    // any other ADAL error: no token is available for this call
                    return null;
                }
            }
        }
    }

In UserInfo.cs, switch out

    ActiveDirectoryClient activeDirectoryClient =
        new ActiveDirectoryClient(serviceRoot, async () => await GetTokenForApplication());

with

    ActiveDirectoryClient activeDirectoryClient =
        new ActiveDirectoryClient(serviceRoot, async () =>
            await TokenHelper.GetTokenForApplicationSilent(TokenHelper.GraphResourceId));

and remove the GetTokenForApplication method, as it’s no longer needed.
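Both ActiveDirectoryClient and OutlookServicesClient are handed an async callback that returns the access token, so the same lambda shape gets repeated for each resource. As a minimal sketch, the binding of a resource ID to the token lookup could be pulled into a small factory. The `TokenProviders` class and its `For` method are hypothetical names used for illustration only; they are not part of the original project or of any library.

```csharp
using System;
using System.Threading.Tasks;

public static class TokenProviders
{
    // Hypothetical helper (not part of the original project): binds a resource ID
    // to a token lookup and returns the parameterless async callback that the
    // service clients accept as their access-token provider.
    public static Func<Task<string>> For(string resourceId, Func<string, Task<string>> getToken)
    {
        if (resourceId == null) throw new ArgumentNullException("resourceId");
        if (getToken == null) throw new ArgumentNullException("getToken");

        // The lookup runs every time the callback is invoked, so a refreshed
        // token is picked up on the next request.
        return () => getToken(resourceId);
    }
}
```

Under these assumptions, the Graph client could be created with `TokenProviders.For(TokenHelper.GraphResourceId, TokenHelper.GetTokenForApplicationSilent)` as its second argument, and the Outlook client with the same call using `TokenHelper.OutlookResourceId`.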

Patching faulty logic in AdalTokenCache.cs

Each user accessing the application gets one cache entry, and that entry stores the tokens for all registered resources, in my case the AAD Graph API and the Outlook API.

The “bug” in the cache logic resides in the AfterAccessNotification method.

    void AfterAccessNotification(TokenCacheNotificationArgs args)
    {
        // if state changed
        if (this.HasStateChanged)
        {
            Cache = new UserTokenCache
            {
                webUserUniqueId = userId,
                cacheBits = MachineKey.Protect(this.Serialize(), "ADALCache"),
                LastWrite = DateTime.Now
            };

            // update the DB and the lastwrite
            db.Entry(Cache).State = Cache.UserTokenCacheId == 0 ? EntityState.Added : EntityState.Modified;
            db.SaveChanges();
            this.HasStateChanged = false;
        }
    }

Every time the cache is updated with new access tokens, a new cache entry is saved in the database, because Cache is replaced with a brand new object and Cache.UserTokenCacheId is therefore always 0. Had the original code author used SingleOrDefault instead of FirstOrDefault when reading the cache, this bug would have been caught pretty quickly.
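To make the difference concrete, here is a small self-contained sketch using plain LINQ over an in-memory list instead of the EF table. The `DuplicateLookupDemo` class and its `Describe` method are made up for this illustration. It shows that FirstOrDefault quietly returns the first match, while SingleOrDefault throws an InvalidOperationException as soon as more than one row matches.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;

public static class DuplicateLookupDemo
{
    // Describes what the two lookups do for the given user ID.
    // cachedUserIds stands in for the webUserUniqueId column of the cache table.
    public static string Describe(IEnumerable<string> cachedUserIds, string userId)
    {
        var matches = cachedUserIds.Where(id => id == userId).ToList();

        // FirstOrDefault returns the first match, however many there are.
        var first = matches.FirstOrDefault();

        try
        {
            // SingleOrDefault throws InvalidOperationException when more than
            // one element matches, and returns null when none do.
            var single = matches.SingleOrDefault();
            return "single: " + (single ?? "none");
        }
        catch (InvalidOperationException)
        {
            return "duplicates hidden by FirstOrDefault: " + first;
        }
    }
}
```

With two rows for the same user the exception branch is taken, which is exactly the early warning the original cache code never got.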

As I don’t like storing more data than needed, and FirstOrDefault could in theory hand back an arbitrary one of the duplicate cache entries, I replace the above code with:

    void AfterAccessNotification(TokenCacheNotificationArgs args)
    {
        // if state changed
        if (this.HasStateChanged)
        {
            Cache = Cache ?? new UserTokenCache();
            Cache.webUserUniqueId = userId;
            Cache.cacheBits = MachineKey.Protect(this.Serialize(), "ADALCache");
            Cache.LastWrite = DateTime.Now;

            // update the DB and the lastwrite
            db.Entry(Cache).State = Cache.UserTokenCacheId == 0 ? EntityState.Added : EntityState.Modified;
            db.SaveChanges();
            this.HasStateChanged = false;
        }
    }

My updated code checks if the cache is already loaded from the database and if so, updates the row. If it is indeed a new user, then a new entry is added.
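The effect of the change can be sketched without Entity Framework. In the example below, `CacheRow` and `FakeCacheTable` are hypothetical stand-ins for the UserTokenCache entity and its table, and `Save` only imitates the Added/Modified decision based on the ID being 0; it is an illustration of the pattern, not the real persistence code.

```csharp
using System;
using System.Collections.Generic;

// Hypothetical stand-in for the UserTokenCache entity.
public class CacheRow
{
    public int Id;              // 0 means "not saved yet", like UserTokenCacheId
    public string UserId;
    public DateTime LastWrite;
}

// Hypothetical stand-in for the UserTokenCaches table.
public class FakeCacheTable
{
    private readonly List<CacheRow> rows = new List<CacheRow>();

    public int Count { get { return rows.Count; } }

    // Imitates the Added/Modified decision: insert when Id is 0,
    // otherwise treat the row as already stored and updated in place.
    public void Save(CacheRow row)
    {
        if (row.Id == 0)
        {
            row.Id = rows.Count + 1;   // simulate the database-generated key
            rows.Add(row);
        }
    }
}

public static class UpsertDemo
{
    // Original pattern: a fresh object per write always has Id == 0.
    public static int WriteWithNewObjectEachTime(int writes)
    {
        var table = new FakeCacheTable();
        for (int i = 0; i < writes; i++)
        {
            var cache = new CacheRow { UserId = "user-1", LastWrite = DateTime.Now };
            table.Save(cache);
        }
        return table.Count;
    }

    // Patched pattern: keep the existing row and only create one when missing.
    public static int WriteReusingRow(int writes)
    {
        var table = new FakeCacheTable();
        CacheRow cache = null;
        for (int i = 0; i < writes; i++)
        {
            cache = cache ?? new CacheRow();
            cache.UserId = "user-1";
            cache.LastWrite = DateTime.Now;
            table.Save(cache);
        }
        return table.Count;
    }
}
```

Three writes with the original pattern leave three rows in the fake table, while the patched pattern leaves a single row that is updated each time.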

I also replace all instances of FirstOrDefault with SingleOrDefault. Because SingleOrDefault throws when it finds more than one match, this forces a clean-up of duplicate entries in the cache database. In Visual Studio, open the .mdf file located in the App_Data folder and clear out the entries in the UserTokenCaches table. Fixing this before the final deploy to Azure, when millions of users crowd in, seems like a pretty smart move.

Send an e-mail

Now it’s time to use the Outlook API and see if I can actually send an e-mail. On UserInfo.aspx I drop a button with a click event.

                <tr>
                    <td>Last Name</td>
                    <td><%#: Item.Surname %></td>
                </tr>
            </table>
        </ItemTemplate>
        </asp:FormView>
        <asp:Button ID="SubmitBtn" runat="server" Text="Send Mail" OnClick="SubmitBtn_OnClick"></asp:Button>
    </asp:Panel>
    </asp:Content>

In the code-behind I add the good old check for post backs so as not to re-bind the user information.

    protected void Page_Load(object sender, EventArgs e)
    {
        if (!this.IsPostBack)
        {
            RegisterAsyncTask(new PageAsyncTask(GetUserData));
        }
    }

The code to send the e-mail is as follows.

    private Task SendEmailTask()
    {
        return Task.Run(async () =>
        {
            var client =
                new OutlookServicesClient(new Uri("https://outlook.office365.com/api/v1.0"),
                    async () => await TokenHelper.GetTokenForApplicationSilent(TokenHelper.OutlookResourceId));

            // Prepare the outlook client with an access token
            await client.Me.ExecuteAsync();

            var servicePointUri = new Uri(TokenHelper.GraphResourceId);
            var tenantId = ClaimsPrincipal.Current.FindFirst("http://schemas.microsoft.com/identity/claims/tenantid").Value;

            // Load your profile and retrieve e-mail address - could have been cached on initial page load
            var serviceRoot = new Uri(servicePointUri, tenantId);
            var activeDirectoryClient = new ActiveDirectoryClient(serviceRoot,
                async () => await TokenHelper.GetTokenForApplicationSilent(TokenHelper.GraphResourceId));

            // await instead of blocking on .Result inside the async lambda
            var user = await activeDirectoryClient.Me.ExecuteAsync();

            var body = new ItemBody
            {
                Content = "<h1>YOU DID IT!!</h1>",
                ContentType = BodyType.HTML
            };

            var toRecipients = new List<Recipient>
            {
                new Recipient
                {
                    EmailAddress = new EmailAddress {Address = user.Mail}
                }
            };

            var newMessage = new Message
            {
                Subject = "O365 Mail by Mikael",
                Body = body,
                ToRecipients = toRecipients,
                Importance = Importance.High
            };

            await client.Me.SendMailAsync(newMessage, true); // true = save a copy in the Sent folder
        });
    }

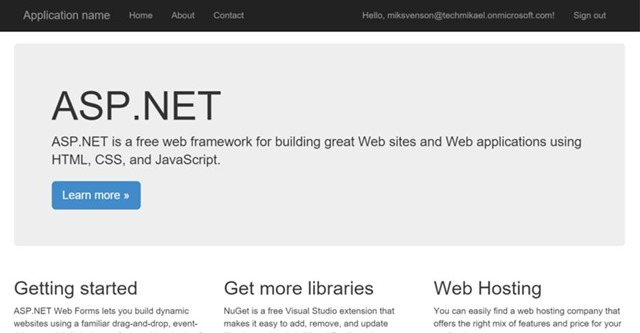

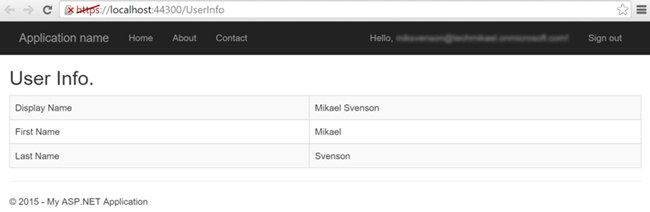

Testing the application and clicking the Send Mail button on the UserInfo page should now send an e-mail to yourself. As there is no navigation link to the UserInfo page, just append /UserInfo to your URL to test it out, for example https://localhost:44300/UserInfo. If all goes to plan, you should see an e-mail in the test user’s mailbox.

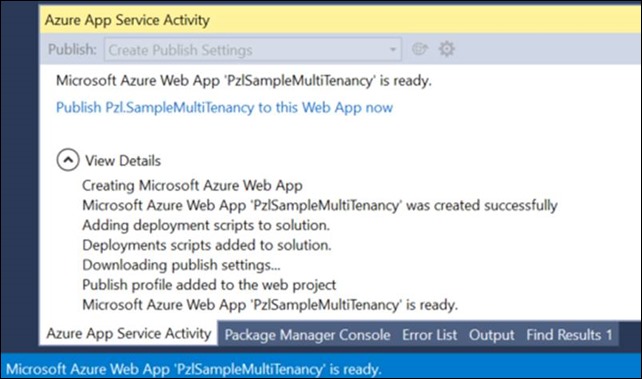

![clip_image002[8] clip_image002[8]](https://blogger.googleusercontent.com/img/b/R29vZ2xl/AVvXsEi3cS9E7fsfgsDqK1KGzytQTHgytYqr-Cny0ApowGwAMhulUraGBL7bGcrmHjwjLZd-LwEa27pmNa9reizxTvusO47zlu_gPWf3K3_mPL8Ah-SUazk7iJ8JckLdnu5HNUqOpZXyV2-A60LS/?imgmax=800)

![clip_image002[7] clip_image002[7]](https://blogger.googleusercontent.com/img/b/R29vZ2xl/AVvXsEgjJ5jipiTIyuK1NemzpOtew5D_F9DXZmvSRH09X0CnXVZDZel2yM89SLAu6vcdCnKS2ZcKtofcqBJPsf5ldfAixmZrzvcT0riHVFI8FIgQUgDbkh-FhTspFGzdiP43SAhls5tma4XV_7vL/?imgmax=800)

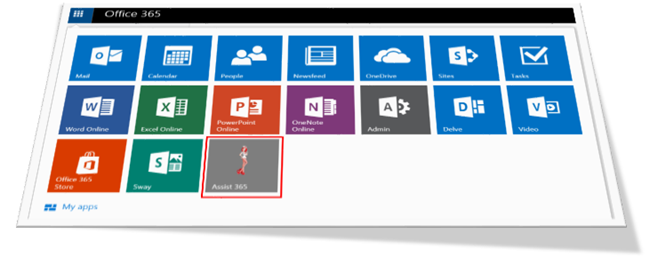

![clip_image004[6] clip_image004[6]](https://blogger.googleusercontent.com/img/b/R29vZ2xl/AVvXsEiqGHulv39P8eds9avU9Jj_bc1WeDVh4YRbthAX9LEbs-s7jlUMMTM_bvVK7iO7-5u7dst6U3SHUp1ScT29T7Vvyd9AtAUbGdE6tq-N-eAyykTWtc0TL-Veegj3IvY-1jiYmuSUAdVOd25q/?imgmax=800)

![clip_image004[8] clip_image004[8]](https://blogger.googleusercontent.com/img/b/R29vZ2xl/AVvXsEgoXUGTKgDkhOoEjll6ujGO_F0OIapNiUTpSFjEW6OqZ2mUNqUTrWTFUu3riRSgj-3934Wk6Hy_pNAA2svS9Hvd8Qf4AYrQhRu8FPCuruJ8hWn7_A8LSMRZYupJsPXHMxQSzEoazYqKR5iY/?imgmax=800)

![clip_image002[7] clip_image002[7]](https://blogger.googleusercontent.com/img/b/R29vZ2xl/AVvXsEg0h7_PU16r7o8FfIiSULTMlv0-85fRpKqGUCzAGcqEWMVJTw0TYYeS161CZ4raf8tMp-NidfsVybn1oxdtjIT4ilgNetHdlPA8wS7aeBZBKsjFzxSQgObE8bonNOrWp8vwBuG_i1W34bx-/?imgmax=800)